Robbyant Introduces Open-Source LingBot-Map for Real-Time 3D Reconstruction Using RGB Cameras

Ant Group’s embodied AI unit introduces a breakthrough streaming 3D mapping model that delivers high-precision, real-time spatial understanding for robotics, AR, and autonomous systems.


Robbyant, the embodied AI company within Ant Group, has open-sourced LingBot-Map, a new streaming 3D reconstruction model. It lets robots, autonomous vehicles, and AR devices perceive and understand their three-dimensional surroundings in real time using only a standard RGB camera.

Unlike traditional 3D reconstruction methods that process a complete set of images offline, LingBot-Map operates on a "see-as-you-go" principle. It continuously estimates the camera's position and reconstructs the scene's 3D structure frame by frame as video is captured, so results are available while recording is still in progress.
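To make the difference between streaming and offline processing concrete, the following is a minimal sketch of a streaming loop. It is not LingBot-Map's API: `stream_poses`, `toy_estimate`, and the fixed-size history window are hypothetical names and assumptions chosen only to show that each result depends on the current frame plus earlier frames, and is produced before the next frame is read.

```python
from collections import deque
from typing import Callable, Deque, Iterable, Iterator, List, Tuple

Frame = List[float]                # hypothetical stand-in for one RGB image
Pose = Tuple[float, float, float]  # hypothetical camera position (x, y, z)


def stream_poses(
    frames: Iterable[Frame],
    estimate: Callable[[Frame, List[Frame]], Pose],
    max_context: int = 4,
) -> Iterator[Pose]:
    """Yield one pose per frame, using only the current frame and recent history."""
    # Assumption: a bounded window; the oldest frame drops out automatically.
    context: Deque[Frame] = deque(maxlen=max_context)
    for frame in frames:
        # The result is yielded before the next frame is pulled from the source.
        yield estimate(frame, list(context))
        context.append(frame)


def toy_estimate(frame: Frame, history: List[Frame]) -> Pose:
    """Placeholder, not a real model: reports how many past frames it was given."""
    return (float(len(history)), 0.0, 0.0)


# A generator models a camera: frames arrive one at a time, never as a full set.
video = ([float(i)] for i in range(6))
poses = list(stream_poses(video, toy_estimate, max_context=4))
```

An offline method would instead need the whole `video` in memory before producing any pose. Here the history never grows beyond `max_context` frames, which keeps per-frame cost bounded in this toy setting.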

LingBot-Map sets a new benchmark for accuracy in the field. On the Oxford Spires dataset, known for its large scale and challenging lighting conditions, the model achieved an Absolute Trajectory Error (ATE) of 6.42 meters, where lower is better. This is a nearly 2.8x improvement in trajectory accuracy over the previous best streaming method, and it also outperforms offline methods such as DA3 (12.87 meters) and VIPE (10.52 meters).
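For readers unfamiliar with the metric, ATE is commonly reported as the root-mean-square distance between estimated and ground-truth camera positions. The sketch below assumes the two trajectories are already expressed in the same coordinate frame; standard evaluations usually align them first, and the article does not say which alignment was used. The function name `ate_rmse` and the sample trajectories are illustrative.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def ate_rmse(estimated: Sequence[Point], ground_truth: Sequence[Point]) -> float:
    """RMSE of per-frame position error for two pre-aligned trajectories."""
    if len(estimated) != len(ground_truth) or not estimated:
        raise ValueError("trajectories must be non-empty and of equal length")
    # Squared Euclidean distance between matching camera positions.
    squared_errors = [
        sum((e - g) ** 2 for e, g in zip(est, gt))
        for est, gt in zip(estimated, ground_truth)
    ]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Toy trajectories in meters (made-up values, not benchmark data).
gt = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
est = [(0.0, 0.0, 0.0), (1.0, 3.0, 0.0), (2.0, 0.0, 4.0)]
ate = ate_rmse(est, gt)  # per-frame errors are 0 m, 3 m, and 4 m
```

Because lower values are better, the reported figures mean DA3's error is about 2.0 times, and VIPE's about 1.6 times, that of LingBot-Map on this dataset.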

The model also leads on other major benchmarks, including ETH3D, 7-Scenes, and Tanks and Temples, in both pose estimation and 3D reconstruction quality. On the ETH3D benchmark, LingBot-Map achieved a reconstruction F1 score of 98.98, more than 21 percentage points higher than the second-place method.
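A reconstruction F1 score is typically the harmonic mean of precision (how many reconstructed points lie close to the reference surface) and recall (how much of the reference is covered by the reconstruction). The sketch below uses that common definition with a brute-force nearest-neighbor search; the distance threshold `tau` and the sample points are assumptions for illustration, and the article does not state the threshold used in the reported results.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def reconstruction_f1(predicted: Sequence[Point], reference: Sequence[Point], tau: float) -> float:
    """Harmonic mean of precision and recall at distance threshold tau."""

    def fraction_within(source: Sequence[Point], target: Sequence[Point]) -> float:
        # Share of source points whose nearest target point is within tau.
        hits = sum(1 for p in source if min(math.dist(p, q) for q in target) <= tau)
        return hits / len(source)

    precision = fraction_within(predicted, reference)
    recall = fraction_within(reference, predicted)
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Toy point clouds in meters (made-up values, not benchmark data).
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0), (3.0, 0.0, 0.0)]
predicted = [(0.0, 0.0, 0.01), (1.0, 0.0, 0.02), (5.0, 0.0, 0.0)]
f1 = reconstruction_f1(predicted, reference, tau=0.05)
```

In this toy case precision is 2/3 and recall is 2/4, giving an F1 of 4/7, or about 57 on the percentage scale used for the 98.98 figure.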

Beyond precision, LingBot-Map combines real-time performance with long-term stability. The model runs inference at approximately 20 FPS and supports continuous inference on long video sequences exceeding 10,000 frames with almost unchanged accuracy. This capability is fundamental for applications requiring continuous, online spatial awareness, such as robot navigation, obstacle avoidance, and complex object manipulation.
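As a quick worked example of what these figures imply, the arithmetic below converts the reported frame rate and sequence length into a per-frame time budget and a total run time. It treats "approximately 20 FPS" as exactly 20 for simplicity.

```python
fps = 20         # approximate inference speed from the announcement
frames = 10_000  # sequence length cited for long-video stability

per_frame_budget_ms = 1000 / fps   # time available per frame to keep pace
total_seconds = frames / fps       # run time for the full sequence at that rate
total_minutes = total_seconds / 60

print(f"{per_frame_budget_ms:.0f} ms per frame, {total_minutes:.1f} minutes for {frames} frames")
```

In other words, the model has roughly 50 ms to process each frame, and a 10,000-frame sequence corresponds to a little over eight minutes of continuous operation.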

The core challenge in streaming 3D reconstruction lies in balancing geometric accuracy, temporal consistency, and computational efficiency. LingBot-Map addresses this through a novel pure auto-regressive modeling approach built on a Geometric Context Transformer.

The model's key innovation is its Geometric Context Attention (GCA) mechanism, which organizes and reuses geometric information across frames, allowing the model to retain crucial historical context while minimizing redundant computation. Inspired by the hierarchical information management of classic SLAM systems, LingBot-Map's architecture uses a single unified model to handle tasks that traditionally require complex, hand-crafted design and optimization.

The launch of LingBot-Map marks a new step in Robbyant's mission to build a comprehensive intelligent foundation for embodied AI. It follows the recent open-sourcing of several other major models:

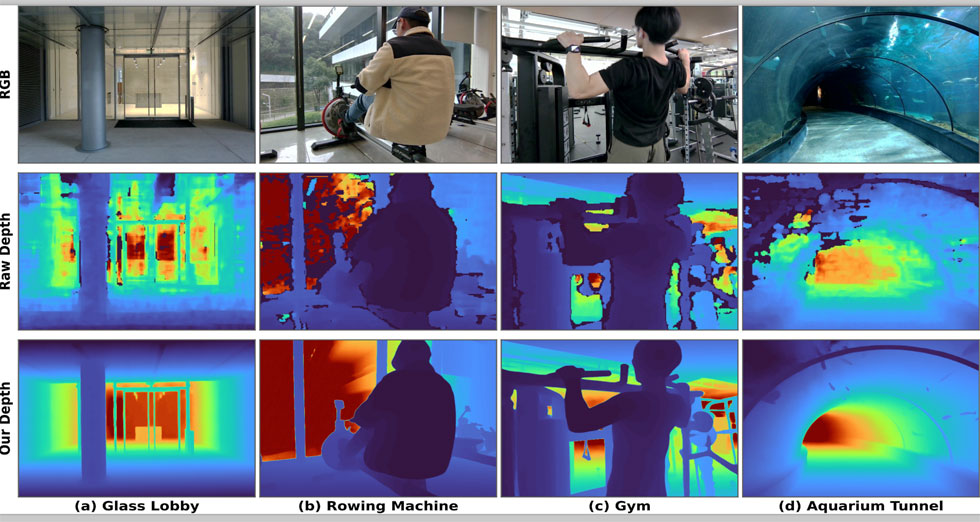

- LingBot-Depth: A high-precision spatial perception model.

- LingBot-VLA: A general-purpose Vision-Language-Action model.

- LingBot-World: A world model for environmental simulation.

- LingBot-VA: An auto-regressive video-action model for robot control.

With LingBot-Map, Robbyant has further strengthened its technology stack, providing a robust solution for real-time spatial understanding and online 3D mapping.